What Is Sales Forecasting and Why Do Most Teams Get It Wrong?

Sales forecasting is the process of projecting future revenue using historical data, pipeline metrics, and predictive signals. It drives budget planning, hiring decisions, territory design, and go-to-market strategy. Yet Gartner research indicates that only 7% of sales organizations achieve forecast accuracy of 90% or higher. Most teams operate in the 50-70% accuracy range, creating misalignment across finance, ops, and leadership.

The root problem isn't methodology. It's governance.

Forecasts fail when data quality is poor, ownership is unclear, and AI models lack auditability. This guide provides a governance-first playbook for building forecasts that leadership actually trusts.

Key Takeaways

- According to Xactly, 4 in 5 sales and finance leaders reported missing a quarterly sales forecast in the past year (2024), with over half missing it two or more times.

- CSO-led analytics initiatives are 2.3× more likely to achieve higher forecast accuracy than decentralized approaches.

- AI-augmented forecasting requires clear governance: model versioning, audit trails, override policies, and human-in-the-loop decision thresholds.

- RevOps alignment matters: organizations with a firmly established RevOps model are 1.4 times more likely to exceed revenue targets by 10% or more.

- The shift from manual CRM updates to automated signal capture (email, calendar, meetings) is redefining forecast inputs in 2026.

Why Sales Forecasting Accuracy Remains Low

Research from Forbes shows that in 2023, 67% of sales operations leaders believed that creating accurate sales forecasts was harder than it had been in 2020. Three structural issues drive this decline:

Data quality and governance gaps. Inconsistent pipeline definitions, missing stage exit criteria, and poor contact enrichment create noisy inputs. When sales reps manually update deal stages without clear criteria, forecast models inherit that variance.

Ownership ambiguity. When no single leader owns forecast outcomes, accountability diffuses. CSO-led analytics teams achieve measurably better results because ownership is explicit and incentives align with accuracy targets.

AI models without governance. Teams deploy ML forecasting without versioning, audit trails, or override policies. When a model changes its prediction by 20% week-over-week, no one can explain why or roll back to a prior version.

10 Sales Forecasting Methods for 2026

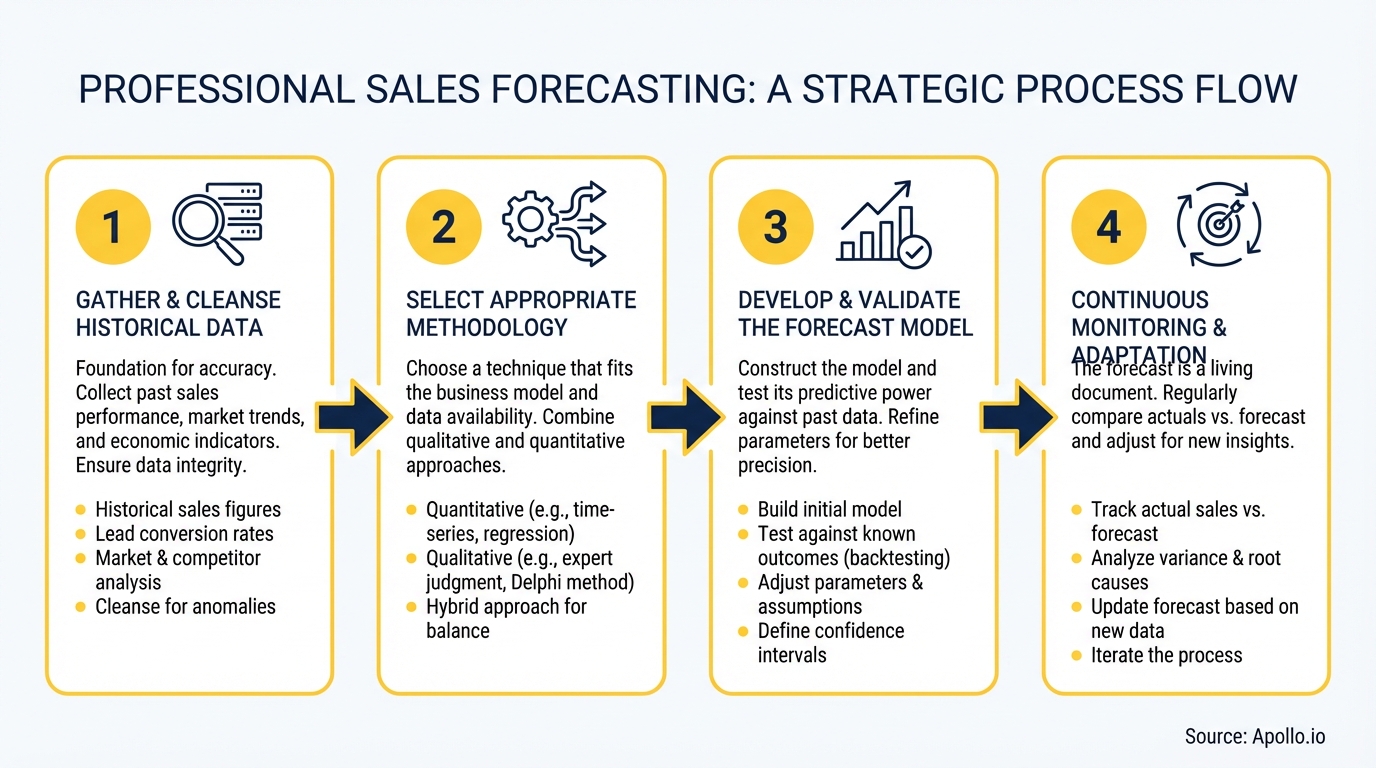

Modern forecasting combines quantitative models with qualitative judgment. Here are the most effective methods, organized by use case:

| Method | Best For | Accuracy Range |
|---|---|---|
| Historical Forecasting | Stable markets with consistent seasonality | 60-75% |
| Pipeline Forecasting | Mid-cycle visibility with weighted stages | 65-80% |
| Stage Conversion Forecasting | Stage-by-stage win rate analysis | 70-85% |
| Sales Cycle Forecasting | Time-based predictions using deal age | 65-80% |
| Regression Analysis | Multi-variable modeling (spend, leads, seasonality) | 70-85% |
| AI/ML Models | Pattern detection across high-dimensional data | 75-90% |
| Top-Down Forecasting | Market sizing and strategic planning | 50-70% |
| Bottom-Up Forecasting | Rep-level quota rollups | 60-75% |
| Time Series Forecasting | Seasonal trends (ARIMA, Prophet models) | 70-85% |
| Hybrid Approach | Combining quantitative models with rep judgment | 80-95% |

The hybrid approach delivers the highest accuracy because it layers algorithmic predictions with frontline intelligence. Reps see deal nuances that models miss. Models spot patterns reps overlook.
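To make the pipeline and hybrid rows of the table concrete, here is a minimal Python sketch. It assumes a stage-weighted forecast is the sum of deal amount times a stage win rate, and that a hybrid forecast blends that stage rate with the rep's own win estimate. The stage names, win rates, the `Deal` class, and the `model_weight` parameter are all hypothetical and would need to be replaced with values from your own historical data.

```python
from dataclasses import dataclass

# Hypothetical stage win rates; in practice these would come from your own
# historical stage-conversion data.
STAGE_WIN_RATE = {
    "discovery": 0.10,
    "proposal": 0.40,
    "negotiation": 0.70,
}


@dataclass
class Deal:
    name: str
    amount: float
    stage: str
    rep_win_probability: float  # the rep's own judgment, 0.0 to 1.0


def pipeline_forecast(deals):
    """Stage-weighted pipeline forecast: sum of amount x stage win rate."""
    return sum(d.amount * STAGE_WIN_RATE[d.stage] for d in deals)


def hybrid_forecast(deals, model_weight=0.6):
    """Blend the stage-based win rate with the rep's estimate, deal by deal."""
    total = 0.0
    for d in deals:
        blended = (model_weight * STAGE_WIN_RATE[d.stage]
                   + (1 - model_weight) * d.rep_win_probability)
        total += d.amount * blended
    return total


deals = [
    Deal("Acme", 100_000, "negotiation", 0.9),
    Deal("Globex", 50_000, "discovery", 0.0),
]
print(round(pipeline_forecast(deals), 2))
print(round(hybrid_forecast(deals), 2))
```

The blend weight is a tuning choice, not a recommendation: a team with well-calibrated reps might lean further toward rep judgment, while a team with strong historical data might lean toward the stage rates.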

The Forecasting Operating Model: Who Owns What

Forecast accuracy improves when roles, responsibilities, and workflows are explicit. Use this RACI framework to assign ownership:

| Role | Responsible | Accountable | Consulted | Informed |
|---|---|---|---|---|
| CSO/CRO | Final forecast accuracy | Model selection | Board reporting | |
| RevOps | Model maintenance, data governance | Stage definitions | Weekly variance reports | |
| Sales Managers | Rep-level forecast reviews | Deal risk flags | Pipeline health | |
| Sales Reps | Deal stage updates, close date estimates | Buyer signals | Quota attainment | |
| Finance | Revenue recognition | Budget planning | | |

This model ensures that forecast accountability sits with the CSO, operational execution with RevOps, and frontline data quality with managers and reps. Learn more about aligning these functions in our Revenue Operations guide.

Data Governance for Forecast Accuracy

Poor data quality is the top blocker to forecast accuracy. Address these four governance pillars:

1. Pipeline stage definitions. Define clear entry and exit criteria for each stage. "Discovery" begins when a champion is identified and pain is validated. It exits when a mutual action plan is documented. Ambiguous stages create forecast noise.

2. Contact and account enrichment. Stale contact data leads to missed signals. Automate enrichment to keep job titles, company size, and firmographics current. This ensures your forecast reflects real account health, not outdated CRM records.

3. Activity capture. Manual CRM updates lag reality. Capture email opens, meeting attendance, and response velocity automatically. These signals improve forecast timeliness and reduce rep bias.

4. Data lineage and auditability. Track where forecast inputs originate. If a deal's close date shifts, your system should show who changed it, when, and why. This audit trail builds trust in forecast outputs.
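Two of these pillars, stage definitions and data lineage, can be sketched in a few lines of Python. The example below is illustrative only: it encodes the "Discovery" entry and exit criteria described above as data, and records who changed a close date, when, and why. The criterion keys, the `Deal` class, and both function names are hypothetical and do not correspond to any particular CRM's API.

```python
from dataclasses import dataclass, field
from datetime import date, datetime, timezone

# Hypothetical criteria keys mirroring the "Discovery" example in the text.
STAGE_CRITERIA = {
    "discovery": {
        "entry": ["champion_identified", "pain_validated"],
        "exit": ["mutual_action_plan_documented"],
    },
}


@dataclass
class Deal:
    name: str
    stage: str
    close_date: date
    completed: set = field(default_factory=set)    # criteria met so far
    audit_log: list = field(default_factory=list)  # one dict per change


def unmet_criteria(deal, kind):
    """Return the 'entry' or 'exit' criteria the deal has not met for its stage."""
    required = STAGE_CRITERIA.get(deal.stage, {}).get(kind, [])
    return [c for c in required if c not in deal.completed]


def change_close_date(deal, new_date, changed_by, reason):
    """Update the close date and record who changed it, when, and why."""
    deal.audit_log.append({
        "field": "close_date",
        "old": deal.close_date,
        "new": new_date,
        "changed_by": changed_by,
        "changed_at": datetime.now(timezone.utc),
        "reason": reason,
    })
    deal.close_date = new_date


deal = Deal("Acme", "discovery", date(2026, 3, 31),
            completed={"champion_identified", "pain_validated"})
print(unmet_criteria(deal, "exit"))
change_close_date(deal, date(2026, 4, 30), "rep@example.com", "Legal review added")
print(len(deal.audit_log))
```

Keeping the criteria as data rather than in reps' heads means the same definitions can drive both CRM validation and forecast review.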

AI Governance for Sales Forecasting

AI-augmented forecasting is becoming standard, but most teams skip governance. This creates model drift, unexplainable predictions, and lost trust. Implement these five AI governance practices:

Model versioning. Maintain a version history of your forecasting models. When accuracy drops, you can revert to a prior version and diagnose what changed.

Audit trails. Log every model prediction, override, and adjustment. If finance challenges a forecast, you can show exactly how the model arrived at its number.

Override policies. Define when and how humans can override AI predictions. Require managers to document their reasoning when they adjust a model's output.

Decision thresholds. Set confidence thresholds for automated actions. For example, a deal the model scores at 85% confidence or higher might be auto-flagged for manager review, while a deal scored below 70% requires rep validation.

Human-in-the-loop workflows. AI should augment judgment, not replace it. Design workflows where reps and managers review model outputs and provide feedback that improves future predictions.
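The decision-threshold and override-policy practices can be expressed as a short Python sketch. It uses the 85% and 70% figures from the example above. The article does not say what happens between those two thresholds, so the sketch assumes no automated action is taken there. The function names, the `Override` record, and its fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

MANAGER_REVIEW_AT = 0.85     # at or above: flag for manager review
REP_VALIDATION_BELOW = 0.70  # below: require rep validation


def route_prediction(confidence):
    """Map a model confidence score (0.0 to 1.0) to a review action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence >= MANAGER_REVIEW_AT:
        return "manager_review"
    if confidence < REP_VALIDATION_BELOW:
        return "rep_validation"
    return "no_action"  # assumption: the middle band needs no automated step


@dataclass(frozen=True)
class Override:
    deal_id: str
    model_version: str     # ties the override to a specific model version
    model_value: float
    override_value: float
    overridden_by: str
    reason: str
    recorded_at: datetime


def record_override(log, deal_id, model_version, model_value,
                    override_value, overridden_by, reason):
    """Append an override to the audit log; reject it if no reason is given."""
    if not reason.strip():
        raise ValueError("an override needs a documented reason")
    entry = Override(deal_id, model_version, model_value, override_value,
                     overridden_by, reason, datetime.now(timezone.utc))
    log.append(entry)
    return entry


audit_log = []
print(route_prediction(0.90))
record_override(audit_log, "D-101", "v3", 0.55, 0.80,
                "manager@example.com", "Verbal commit from buyer's CFO")
print(len(audit_log))
```

Storing the model version on every override is what makes a later rollback or diagnosis possible: you can see which version produced the number a manager disagreed with.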

For more on AI governance in sales, see our guide on AI sales tools that actually close deals.

Forecast Ownership and Incentives

Forecast accuracy improves when accountability is explicit and incentives align. Here's how to structure ownership:

CSO owns the number. The Chief Sales Officer is accountable for forecast accuracy to the board. This role sets accuracy targets, reviews variance reports, and escalates persistent issues.

RevOps owns the process. Revenue Operations maintains models, enforces data governance, and produces weekly variance reports. They flag when accuracy degrades and recommend corrective actions.

Managers own rep-level reviews. Sales managers conduct weekly forecast reviews with their reps. They validate deal stages, challenge optimistic close dates, and surface risk early.

Reps own data quality. Sales reps are responsible for timely stage updates and accurate close date estimates. Tie a portion of compensation to forecast accuracy to incentivize honest, timely updates.

Organizations that align incentives with forecast accuracy see measurably better outcomes. When reps know their manager will review their pipeline weekly, sandbagging and optimism bias decline.

Implementation Roadmap: Quick Wins to Long-Term Gains

Quick wins (30-60 days):

- Define clear stage entry/exit criteria and train reps

- Implement automated contact enrichment

- Launch weekly manager-led forecast reviews

- Create a forecast accuracy dashboard visible to leadership

Mid-term improvements (90-180 days):

- Deploy AI-augmented forecasting with audit trails

- Establish RACI ownership and SLAs

- Integrate activity capture (email, calendar, meetings)

- Launch a forecast accuracy incentive for reps

Long-term transformation (6-12 months):

- Build a governed AI model with versioning and override policies

- Align forecast cadence with board reporting cycles

- Create cross-functional forecast review rituals (sales, finance, ops)

- Measure and optimize forecast accuracy by segment, region, and deal type
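Measuring accuracy by segment needs an agreed definition first. The article does not define one, so the Python sketch below assumes a common convention: accuracy equals 1 minus the absolute forecast error divided by actual revenue, floored at zero. The function names and the sample figures are hypothetical.

```python
from collections import defaultdict


def forecast_accuracy(forecast, actual):
    """Assumed definition: 1 - |forecast - actual| / actual, floored at 0."""
    if actual <= 0:
        raise ValueError("actual revenue must be positive")
    return max(0.0, 1.0 - abs(forecast - actual) / actual)


def accuracy_by_segment(rows):
    """rows: iterable of (segment, forecast, actual); totals are summed per segment."""
    totals = defaultdict(lambda: [0.0, 0.0])
    for segment, forecast, actual in rows:
        totals[segment][0] += forecast
        totals[segment][1] += actual
    return {seg: forecast_accuracy(f, a) for seg, (f, a) in totals.items()}


# Made-up quarterly figures for illustration only.
rows = [
    ("enterprise", 1_200_000, 1_000_000),
    ("smb", 450_000, 500_000),
    ("smb", 300_000, 300_000),
]
for segment, accuracy in accuracy_by_segment(rows).items():
    print(f"{segment}: {accuracy:.1%}")
```

The same grouping works for region or deal type by swapping the first element of each row. Whatever definition you choose, apply it consistently so that quarter-over-quarter comparisons stay meaningful.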

For more on building a scalable RevOps function, see our sales performance management strategy guide.

How Apollo Powers Forecasting Teams

Apollo provides the data foundation and workflow automation that accurate forecasts require:

- Real-time pipeline analytics give you deal-level and rep-level visibility into stage progression, velocity, and conversion rates.

- Automated enrichment keeps contact and account data current, reducing forecast noise from stale CRM records.

- Activity intelligence captures email opens, meeting attendance, and response velocity to surface deal risk early.

- Sequence performance data links outreach effectiveness to pipeline creation, helping you forecast new business generation.

- CRM integrations unify GTM data across systems, eliminating data silos that degrade forecast accuracy.

Teams using Apollo report cleaner pipelines, faster deal cycles, and more predictable revenue. Learn more in our deal management product overview.

Start Building More Accurate Forecasts Today

Sales forecasting is moving from spreadsheets and rep commits to governed, AI-augmented models with clear ownership and auditability. The teams that win in 2026 will be those that treat forecasting as an operating discipline, not a quarterly ritual.

Start with governance: define roles, set data quality standards, and establish audit trails. Then layer in AI to augment human judgment.

The result is forecasts that leadership trusts and revenue teams can actually execute against.

Ready to build a more predictable pipeline? Start Free with Apollo.
