How RevOps Teams Govern and Track AI SDR Performance Alongside Human Reps

The hybrid AI SDR and human SDR model is now the default operating structure for B2B revenue teams in 2026. But most RevOps leaders track these two contributor types on separate dashboards with incompatible definitions, and that gap costs them pipeline visibility and governance control. Tools like Apollo's AI Sales Assistant can run end-to-end GTM workflows from a single platform, making unified measurement more achievable. The challenge is building the governance layer around that platform.

According to optif.ai, 89% of revenue organizations now use AI-powered tools — up from 34% in 2023. That adoption speed has outpaced most teams' governance readiness. This article gives RevOps leaders a practical framework for tracking AI SDR performance alongside human reps on a level playing field.

Key Takeaways

- AI SDRs and human SDRs must be measured using identical outcome definitions (meeting, SAL, SQL) — not separate activity-based dashboards.

- Governance requires dual KPIs: pipeline contribution metrics and risk/data-quality metrics running in parallel.

- Data readiness is a prerequisite: poor contact data degrades AI SDR output before the first sequence sends.

- RevOps leaders should own QA rubrics and escalation protocols for AI-generated messaging — not leave it to individual managers.

- Maturity-aware benchmarking means normalizing AI SDR performance by data integration depth and team size before comparing results.

Why Do RevOps Teams Need a Governance-First Approach to AI SDR Tracking?

RevOps teams need governance-first AI SDR tracking because AI agents are both performance contributors and risk surfaces simultaneously. Without explicit policies, AI SDRs can create compliance exposure, damage brand reputation, and produce misleading pipeline metrics that distort forecasting.

revops.tools notes that RevOps is emerging as the function responsible for making AI tools coherent across sales, marketing, and customer success. That coherence starts with governance — not dashboards. Governance defines what the AI agent is permitted to do, what data it can access, and how its outputs get audited before they reach prospects.

For revenue operations teams managing hybrid AI-human pipelines, the governance model has three layers: permissioning (who can the agent message), audit trails (what did it say and when), and performance attribution (did it actually move pipeline).
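As a rough illustration of the first two layers, the sketch below gates each send on a permission check and records every attempt in an audit log. The `GovernedAgent` class, its field names, and the segment labels are hypothetical and not part of any vendor API; performance attribution is left out of this sketch.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GovernedAgent:
    """Hypothetical wrapper around an AI SDR; not a real vendor API."""
    allowed_segments: set                      # permissioning: segments the agent may message
    audit_log: list = field(default_factory=list)

    def send(self, contact_id: str, segment: str, message: str) -> bool:
        permitted = segment in self.allowed_segments
        # Audit trail: record every attempt, including blocked ones.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "contact_id": contact_id,
            "segment": segment,
            "message": message,
            "sent": permitted,
        })
        return permitted  # the caller delivers the message only when this is True


agent = GovernedAgent(allowed_segments={"mid_market"})
agent.send("c-001", "mid_market", "Hi Dana, ...")   # permitted
agent.send("c-002", "enterprise", "Hi Lee, ...")    # blocked, but still logged
```

Logging blocked attempts alongside permitted ones is a design choice here: it lets a reviewer see what the agent tried to do, not only what it did.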

How Do RevOps Teams Build a Dual Scorecard for AI and Human SDRs?

A dual scorecard measures AI SDRs and human SDRs on identical outcome definitions while also tracking AI-specific risk signals. The key principle: use the same meeting, SAL, and SQL definitions for both contributor types so comparisons are valid.

| Metric | AI SDR | Human SDR | Shared Definition |
|---|---|---|---|
| ICP-Fit Meeting | Tracked by agent source tag | Tracked by rep source tag | Booked meeting with decision maker at ICP-matching account |
| SAL (Sales Accepted Lead) | AE accepts handoff from AI sequence | AE accepts handoff from human rep | AE confirms lead meets qualification criteria within 48 hours |
| SQL (Sales Qualified Lead) | Opportunity created from AI-sourced meeting | Opportunity created from human-sourced meeting | Opportunity stage 2+ with BANT or MEDDIC score |
| Data Quality Score | % of contacts with verified email + phone | N/A (human judgment applied) | AI-specific risk KPI |
| Bounce / Spam Rate | Monitored per sequence run | Monitored per campaign | Shared domain health threshold |

The dual scorecard should also include an attribution window. AI SDRs typically operate on shorter first-touch windows (24–72 hours for email reply) while human SDRs may have longer nurture cycles. Define these windows explicitly in your CRM before running comparisons. See how this fits into broader sales performance management frameworks.
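One way to picture the shared definitions and per-source attribution windows is the minimal sketch below. The `Lead` fields, the `"ai"`/`"human"` source tags, and both window lengths are assumptions for illustration (the 14-day human window in particular is invented); substitute whatever your CRM actually records.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical attribution windows; set these to match your own CRM rules.
ATTRIBUTION_WINDOW = {"ai": timedelta(hours=72), "human": timedelta(days=14)}


@dataclass
class Lead:
    source: str                           # "ai" or "human" (assumed source tag)
    first_touch: datetime
    meeting_booked: Optional[datetime] = None
    sal: bool = False                     # accepted by the AE
    sql: bool = False                     # opportunity created


def scorecard(leads):
    """Count meetings, SALs, and SQLs per source using one shared definition."""
    totals = defaultdict(lambda: {"leads": 0, "meetings": 0, "sal": 0, "sql": 0})
    for lead in leads:
        row = totals[lead.source]
        row["leads"] += 1
        window = ATTRIBUTION_WINDOW[lead.source]
        attributed = (
            lead.meeting_booked is not None
            and lead.meeting_booked - lead.first_touch <= window
        )
        if attributed:  # outcomes count only when the meeting falls inside the window
            row["meetings"] += 1
            row["sal"] += int(lead.sal)
            row["sql"] += int(lead.sql)
    return dict(totals)
```

Because both contributor types pass through the same counting logic, any difference in the output reflects the leads themselves and the declared windows rather than a difference in definitions.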

What Data Quality SLAs Should Govern AI SDR Performance?

Data quality SLAs for AI SDRs define the minimum acceptable standards for contact data before the agent is permitted to enroll a prospect in a sequence. Without these SLAs, AI agents amplify dirty data at scale.

Research from revpack.co shows that companies with centralized data governance achieve 15% higher forecast accuracy and 20% faster decision-making. For AI SDR programs, that means RevOps must define and enforce:

- Email validity threshold: Only enroll contacts with verified email addresses (that is, no catch-all or unverified records)

- Firmographic completeness: Account must have company size, industry, and revenue populated before AI touches it

- Deduplication rules: Do not enroll a contact if a duplicate exists in the CRM with an active opportunity or any activity within the last 90 days

- Opt-out suppression: Real-time sync with unsubscribe lists before every sequence run

- Refresh cadence: Contact records flagged for re-enrichment if not updated within a defined period
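The five SLAs above could be enforced as a single enrollment gate, roughly as sketched below. The `Contact` fields, the status strings, and the 180-day refresh period are assumptions made for illustration. Treating a stale record as a blocker (rather than only flagging it for re-enrichment) is also a choice made in this sketch.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

MAX_RECORD_AGE = timedelta(days=180)  # hypothetical refresh period


@dataclass
class Contact:
    email_status: str                # assumed values: "verified", "catch_all", "unverified"
    company_size: Optional[int]
    industry: Optional[str]
    revenue: Optional[float]
    has_active_duplicate: bool       # duplicate with open opportunity or activity in last 90 days
    opted_out: bool                  # looked up from the suppression list at run time
    last_enriched: date


def enrollment_blockers(contact: Contact, today: date) -> list:
    """Return the reasons a contact fails the gates; an empty list means it may be enrolled."""
    blockers = []
    if contact.email_status != "verified":
        blockers.append("email_not_verified")
    if any(v is None for v in (contact.company_size, contact.industry, contact.revenue)):
        blockers.append("firmographics_incomplete")
    if contact.has_active_duplicate:
        blockers.append("active_duplicate")
    if contact.opted_out:
        blockers.append("opted_out")
    if today - contact.last_enriched > MAX_RECORD_AGE:
        blockers.append("needs_re_enrichment")
    return blockers
```

Returning the list of failed gates, instead of a bare yes or no, gives RevOps a per-reason breakdown it can report on as a data quality KPI.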

Apollo's Outbound Copilot shows the credit cost before each run, giving RevOps a natural enforcement checkpoint for data quality gates. Pair this with sales transformation practices that embed data governance into every workflow step.

How Should RevOps Leaders Benchmark AI SDR Performance by Team Maturity?

RevOps leaders should benchmark AI SDR performance relative to their team's data maturity and integration depth — not against universal industry averages. A team with shallow CRM hygiene and no enrichment pipeline will see different AI SDR outputs than one with a fully unified data layer.

A practical three-tier maturity model:

| Maturity Tier | Characteristics | Primary AI SDR KPIs |
|---|---|---|
| Tier 1 (Early) | CRM partially populated, manual enrichment, no deduplication | Sequence completion rate, bounce rate, data fill % |
| Tier 2 (Developing) | Automated enrichment, basic intent signals, partial integration | Reply rate, meeting booked rate, SAL conversion |
| Tier 3 (Mature) | Unified data layer, intent + firmographic scoring, full audit trail | Pipeline sourced, win rate downstream, cost per SQL |

Tier 1 teams should focus governance effort on data readiness before expanding AI SDR volume. Tier 3 teams can run controlled A/B holdout experiments comparing AI-only, human-only, and hybrid cohorts. For context on scaling this framework, see how sales analytics drives revenue growth.
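To make the tiering concrete, here is a small sketch that maps a team's data capabilities to a tier and returns the KPIs from the table. The `DataMaturity` flags and the exact cut-off rules are assumptions; the table describes the tiers qualitatively and does not prescribe a classification rule.

```python
from dataclasses import dataclass

# KPI names taken from the maturity table; the snake_case keys are illustrative.
TIER_KPIS = {
    1: ["sequence_completion_rate", "bounce_rate", "data_fill_pct"],
    2: ["reply_rate", "meeting_booked_rate", "sal_conversion"],
    3: ["pipeline_sourced", "downstream_win_rate", "cost_per_sql"],
}


@dataclass
class DataMaturity:
    automated_enrichment: bool
    deduplication: bool
    unified_data_layer: bool
    full_audit_trail: bool


def maturity_tier(m: DataMaturity) -> int:
    """Assumed rule: Tier 3 needs every capability, Tier 2 needs automated enrichment."""
    if all((m.automated_enrichment, m.deduplication, m.unified_data_layer, m.full_audit_trail)):
        return 3
    if m.automated_enrichment:
        return 2
    return 1


def primary_kpis(m: DataMaturity) -> list:
    return TIER_KPIS[maturity_tier(m)]
```

Comparing AI SDR results only against teams in the same tier keeps a Tier 1 program from being judged on pipeline-sourced numbers it is not yet equipped to produce.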

How Do Sales Managers Run QA and Coaching Workflows for AI SDR Messaging?

Sales managers govern AI SDR messaging quality by reviewing a sample of AI-generated sequences weekly using a structured rubric, then escalating exceptions before they reach prospects at scale.

A practical manager QA rubric scores each AI-generated message on:

- ICP relevance: Does the message reference the prospect's actual role, company stage, or pain point?

- Value prop accuracy: Is the product positioning consistent with the approved AI Content Center configuration?

- Tone compliance: Does the message match brand voice guidelines?

- Call-to-action clarity: Is there one clear next step?

- Compliance flags: No unverified claims, no suppressed contact types

Escalation protocol: any message scoring below threshold gets flagged to RevOps for a sequence-level pause, not just an edit to the individual message. This prevents a bad prompt or misconfigured Content Center from sending at volume.

Tory Kindlick, Head of Revenue Ops at RapidSOS, describes this kind of AI-assisted workflow: "Apollo's AI Assistant makes me look like one. My colleagues think I'm some kind of AI prodigy, when really I'm just chatting with the Assistant and letting it bring my ideas to life."
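The rubric and escalation rule can be sketched as follows. The 1-5 scale, the unweighted mean, and the 4.0 threshold are all assumptions; the article does not specify a scoring scale or a threshold value.

```python
RUBRIC = ("icp_relevance", "value_prop_accuracy", "tone_compliance", "cta_clarity", "compliance")
PASS_THRESHOLD = 4.0  # hypothetical: mean score on an assumed 1-5 scale


def message_score(scores: dict) -> float:
    """Unweighted mean across the five rubric criteria."""
    return sum(scores[criterion] for criterion in RUBRIC) / len(RUBRIC)


def sequences_to_pause(reviews: list) -> list:
    """reviews holds (sequence_id, scores) pairs from the weekly sample.

    One below-threshold message is enough to flag the whole sequence.
    """
    flagged = {seq_id for seq_id, scores in reviews if message_score(scores) < PASS_THRESHOLD}
    return sorted(flagged)


reviews = [
    ("seq-a", dict(icp_relevance=5, value_prop_accuracy=5, tone_compliance=4, cta_clarity=5, compliance=5)),
    ("seq-b", dict(icp_relevance=2, value_prop_accuracy=3, tone_compliance=4, cta_clarity=3, compliance=5)),
    ("seq-b", dict(icp_relevance=5, value_prop_accuracy=5, tone_compliance=5, cta_clarity=5, compliance=5)),
]
print(sequences_to_pause(reviews))  # ['seq-b']
```

Note that `seq-b` is paused even though one of its sampled messages scored perfectly, which mirrors the sequence-level (rather than message-level) escalation described above.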

What Tools and Integrations Support AI SDR Governance at Scale?

Effective AI SDR governance requires a unified platform where prospecting data, sequence execution, and performance reporting share the same data layer — not separate tools stitched together.

The core integration requirements for a governance-ready AI SDR stack:

- Bidirectional CRM sync: Every AI-touched contact updates in real time; no orphaned records

- Enrichment pipeline: Automated contact verification before enrollment, not manual spot-checks

- Sequence audit logs: Full history of what the AI sent, to whom, and when — exportable for compliance review

- Role-based access controls: Managers can view and pause AI sequences; reps cannot override suppression lists

- Scoring integration: AI lead scores (like Apollo's Scores) gate which accounts the AI agent can prospect

For teams building or auditing their full stack, the sales tech stack playbook covers how to structure integrations that scale without adding governance complexity.

How Should RevOps Leaders Get Started with AI SDR Governance in 2026?

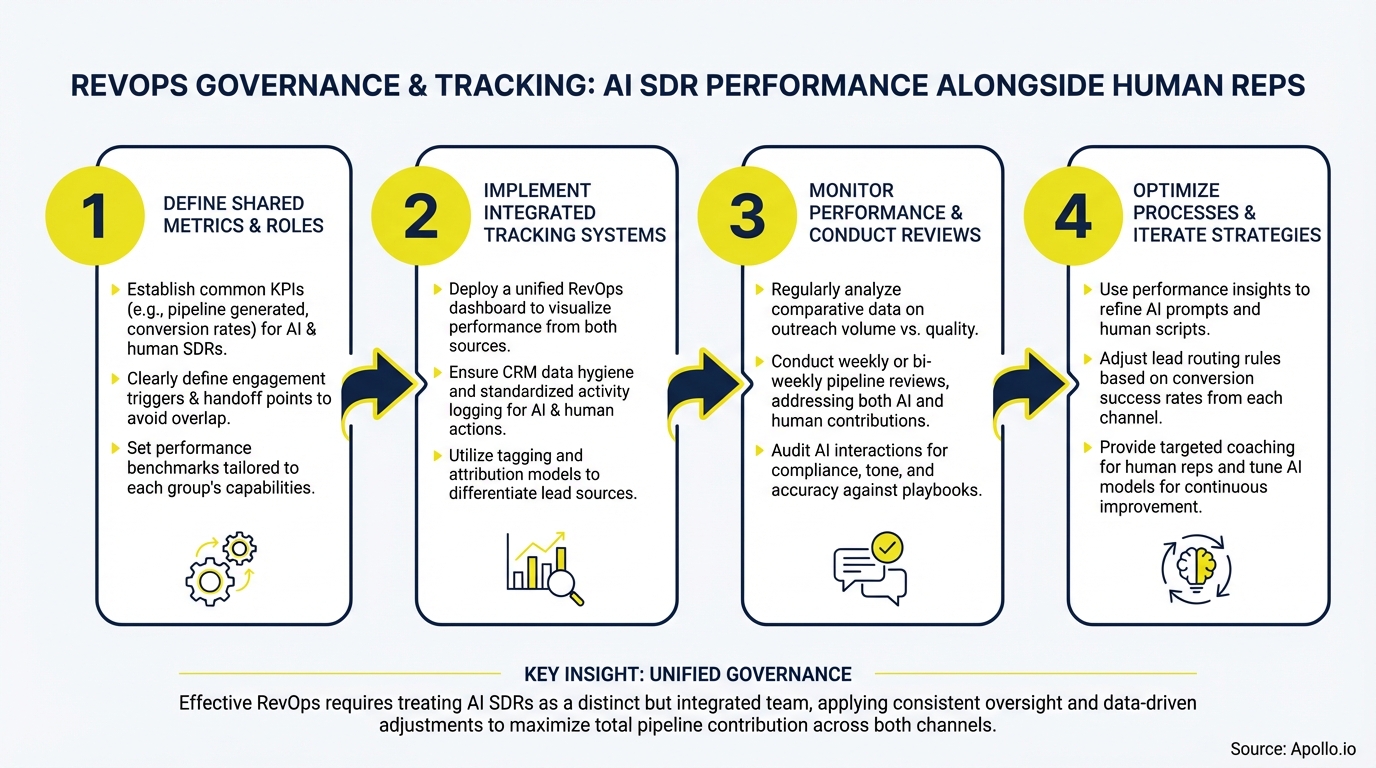

RevOps leaders should start AI SDR governance by locking in three things before scaling volume: shared definitions, data quality gates, and a manager QA cadence. Everything else builds on that foundation.

A 30-day launch checklist:

- Define meeting, SAL, and SQL in your CRM so both AI and human SDR contributions use identical criteria

- Set minimum data quality thresholds (email validity, firmographic completeness) as enrollment gates

- Configure your AI Content Center with approved value props, ICP pain points, and brand voice guidelines

- Assign a manager to run weekly message QA reviews using a structured rubric

- Build side-by-side dashboards comparing AI SDR and human SDR on shared outcome KPIs

- Run a 30-day A/B holdout experiment: AI-only vs. human-only on matched account segments
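For the last checklist item, one possible way to build matched cohorts is to split accounts evenly within each segment, as in the sketch below. The segment labels, cohort names, and seed are illustrative assumptions, and a seeded shuffle is only one of several reasonable assignment methods.

```python
import random
from collections import defaultdict

COHORTS = ("ai_only", "human_only")


def assign_cohorts(accounts, seed=2026):
    """accounts is a list of (account_id, segment) pairs; returns {account_id: cohort}.

    Accounts are shuffled within each segment, then dealt out alternately, so
    each segment contributes near-equal numbers to both cohorts.
    """
    by_segment = defaultdict(list)
    for account_id, segment in accounts:
        by_segment[segment].append(account_id)

    rng = random.Random(seed)  # fixed seed keeps the assignment reproducible for audit
    assignment = {}
    for segment in sorted(by_segment):
        ids = sorted(by_segment[segment])
        rng.shuffle(ids)
        for i, account_id in enumerate(ids):
            assignment[account_id] = COHORTS[i % len(COHORTS)]
    return assignment
```

Storing the seed and the resulting assignment alongside the experiment lets anyone re-derive which accounts belonged to which cohort when the 30 days are up.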

Governance is what separates AI SDR programs that compound over time from ones that create liability. With the right framework, RevOps can give both AI agents and human SDRs clear accountability, and give leadership the visibility they need to make confident decisions about where to invest.
