How to Pilot an Agentic GTM Motion Before Full Rollout

Most agentic GTM projects fail not because the AI is bad, but because the pilot had no real success criteria. Before you commit to a full rollout, you need a structured 6–10 week test with explicit kill/scale gates, a readiness baseline, and direct revenue linkage. According to Landbase, 79% of organizations report some level of AI agent adoption as of 2025, with 96% planning to expand usage — yet most are still figuring out how to prove value before scaling.

This guide gives you a practitioner-ready blueprint: a readiness checklist, a week-by-week pilot playbook, a scorecard with kill/scale criteria, and an ROI measurement framework. Whether you're a RevOps leader, a demand gen director, or a founder building your first outbound motion, this is how you pilot an agentic GTM motion responsibly. For context on how your broader GTM strategy should be structured before you layer in agents, start there.

Scale Smarter With Apollo's AI Engine

Tired of your reps burning hours on manual research instead of closing deals? Apollo surfaces verified contacts and automates outreach so your team scales without the chaos. Join 600K+ companies building predictable pipeline.

Start Free with Apollo →Key Takeaways

- Start with a single "workflow wedge" (one job-to-be-done) that is measurable, sandboxable, and low-risk before touching pricing or forecasting workflows.

- Treat agent readiness as a formal workstream: data access, permissions, taxonomy, and analytics instrumentation must be validated before the pilot begins.

- Build explicit kill/scale criteria into your scorecard from day one so the decision to proceed or stop is evidence-based, not political.

- Link pilot outputs directly to revenue signals (MQL to SQL conversion, influenced pipeline, win-rate lift) — not just productivity metrics.

- Run a vendor bake-off (agent vs. human + copilot) with a defined acceptance threshold to avoid being misled by "agent-washing" marketing claims.

What Is an Agentic GTM Motion, and Why Does It Need a Pilot?

An agentic GTM motion is a go-to-market workflow where AI agents autonomously execute multi-step tasks — such as account research, outbound personalization, lead follow-up, or CRM updates — with minimal human intervention at each step. Unlike standard automation, agents make decisions, use tools, and chain actions together based on goals, not just triggers.

A pilot is non-negotiable because the failure rate is high. Gartner predicted at least 30% of GenAI projects would be abandoned after proof of concept, driven by poor data quality, inadequate risk controls, escalating costs, or unclear business value. A structured pilot is the mechanism that separates projects that survive from those that get cut. For RevOps leaders, this also connects directly to how revenue operations drives growth — agents only add value when they're embedded in a functioning GTM system.

How Do You Assess Readiness Before Starting a Pilot?

Readiness assessment covers four domains: data quality, systems access, governance, and analytics instrumentation. Without these baselines, your pilot will produce unreliable results that can't be attributed to the agent's performance.

| Domain | Readiness Check | Remediation If Not Ready |

|---|---|---|

| Data Quality | CRM contacts enriched, no >15% bounce rate | Run a data enrichment pass before launch |

| Systems Access | Agent has API access to CRM, inbox, and calendar | Provision sandbox credentials with scoped permissions |

| Governance | Approval workflow and escalation path defined | Assign a human-in-the-loop reviewer role |

| Analytics | Tracking events fire for each agent action | Instrument events before week 1 of pilot |

A Gartner survey of 413 martech leaders found 50% said they lacked the technical and data stack readiness required for AI agent deployment. Build a remediation backlog from this checklist and clear it before you start the clock on your pilot timeline. Struggling to ensure your contact data is clean enough for agents to act on? Enrich your B2B contacts with Apollo's 230M+ verified records before your pilot begins.

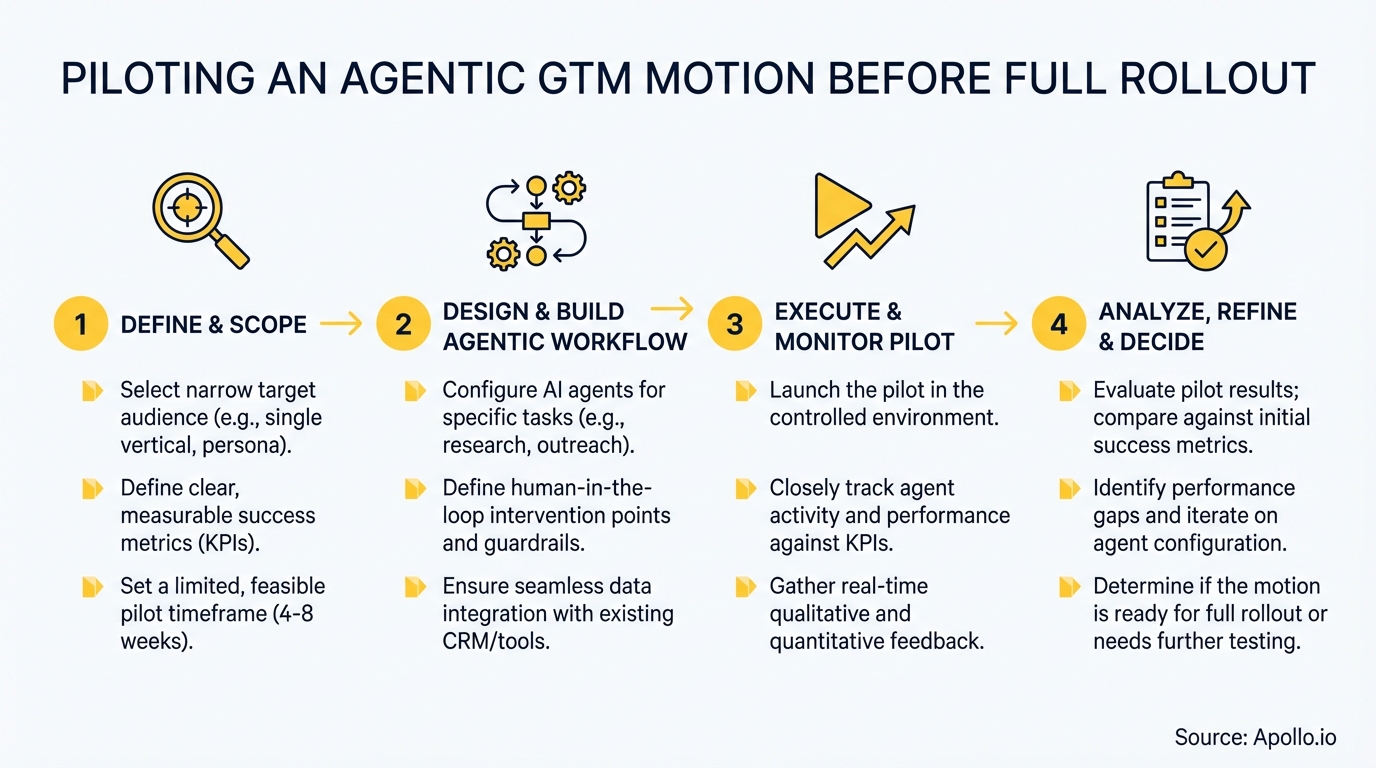

What Does a 6–8 Week Agentic GTM Pilot Playbook Look Like?

A successful pilot runs in three phases: setup and instrumentation, controlled execution, and evaluation. Each phase has a defined gate that must be passed before proceeding.

| Phase | Weeks | Activities | Gate Criteria |

|---|---|---|---|

| Setup | 1–2 | Readiness audit, sandbox provisioning, define success metrics, select workflow wedge | All readiness checks pass; metrics baselined |

| Execution | 3–6 | Agent runs on test segment; human reviewers QA outputs; A/B comparison tracked | Agent accuracy ≥ threshold; no compliance flags |

| Evaluation | 7–8 | Scorecard completed; kill/scale decision presented to stakeholders | Revenue signal movement in test segment |

The most effective starting wedge is outbound personalization or lead follow-up. These are measurable, sandboxable, and don't touch pricing or forecasting. Research from G2 shows 71% of B2B marketers use generative AI weekly, primarily for content creation — which confirms this is the highest-frequency, most measurable use case to start with. Your pilot's content outputs should connect directly into your B2B marketing funnel so attribution is clean.

Turn Funnel Guesswork Into Pipeline Clarity

Pipeline forecasting a guessing game because marketing leads never convert? Apollo surfaces verified, in-market prospects so every rep works opportunities worth closing. Nearly 100K paying customers stopped forecasting blind.

Schedule a Demo →How Do RevOps Leaders Build a Pilot Scorecard with Kill/Scale Criteria?

RevOps leaders should anchor the scorecard to three outcome categories: efficiency, quality, and revenue impact. Each category needs a defined threshold for "scale," a warning zone, and a kill trigger.

| Outcome Category | Scale Signal | Kill Signal |

|---|---|---|

| Efficiency | Agent completes task faster than human baseline with fewer revisions | Revision rate exceeds human baseline by >20% |

| Quality | QA pass rate ≥ defined threshold; zero compliance flags | Any compliance violation or >10% factual error rate |

| Revenue Impact | MQL→SQL or reply rate improves in test segment vs. control | No measurable pipeline movement after 6 weeks |

Gartner found 45% of martech leaders say vendor-offered AI agents fail to meet promised performance expectations. The scorecard protects you from sunk-cost pressure by making the kill decision a pre-agreed, evidence-based trigger — not a judgment call. For demand gen leaders, tie scorecard results directly to your demand generation benchmarks so the pilot output is comparable to existing channel performance.

How Do You Run a Vendor Bake-Off to Avoid Agent-Washing?

A vendor bake-off compares the AI agent against a human-plus-copilot baseline on the same set of "golden tasks" to determine whether the agent actually outperforms the current workflow. Many tools marketed as agentic are closer to workflow automation, so controlled testing is the only reliable evaluation method.

Bake-off protocol:

- Define 10–20 golden tasks representing your pilot workflow wedge (e.g., personalize 20 outbound emails for a specific ICP segment).

- Have the agent complete the tasks; have a human rep complete the same tasks with their current tools.

- Score both outputs on accuracy, compliance, time-to-complete, and downstream conversion rate.

- Set an acceptance threshold (e.g., agent must match or beat human on at least 3 of 4 criteria) before week 6.

- Document failure modes: hallucinations, off-brand tone, missing personalization signals, or tool access errors.

The current trend toward "pilot to production" as a governance problem means your bake-off should also evaluate the agent's observability: does the vendor provide audit trails, escalation paths, and human-in-the-loop controls? If not, that's a structural risk, not just a capability gap. This also applies when you're evaluating how to build a sales tech stack — consolidation matters as much as capability.

How Do You Link Agentic Pilot Outputs to Revenue?

Connect agent outputs to revenue by designing a simple control-group experiment from the start of the pilot. Split your target account list into a test segment (agent-assisted) and a control segment (human-only), then measure MQL-to-SQL conversion rate, reply rate, pipeline influenced, and win rate across both groups over the pilot window.

According to Devrix, 93% of GTM teams already use AI in some form — which means your pilot isn't proving whether AI works in general, but whether this specific agent workflow moves your specific revenue metrics. Keep the experiment clean: don't change sequences, messaging, or ICP targeting in the control group during the pilot window.

Unit economics matter here too. With usage-based and credit-based pricing now common across agentic platforms, track cost per qualified meeting and cost per opportunity influenced alongside revenue metrics. If agent spend scales faster than pipeline, that's a kill signal regardless of reply rate improvements. Spending too much time managing disconnected tools instead of acting on pipeline signals? Run your entire GTM motion from one platform with Apollo.

How Do You Move From a Successful Pilot to Full Rollout?

Moving from pilot to production requires three operationalization artifacts: a content taxonomy (defining the asset types, personas, and ICP segments the agent will serve), a workflow redesign document (mapping which human steps are replaced, supervised, or unchanged), and a change-management plan for the reps or marketers whose workflows shift.

SDRs and BDRs are often the first to feel the change. The transition works best when agents handle account research, first-draft personalization, and CRM data entry, while reps focus on conversation quality and relationship depth. This aligns with how sales automation works best in practice: AI handles volume and consistency; humans handle judgment and trust.

Scale criteria from your scorecard become your rollout gating conditions. Don't expand to new segments, channels, or use cases until the pilot segment has hit all three outcome thresholds for at least two consecutive weeks.

Premature scaling is the most common reason successful pilots become failed rollouts.

Start Your Agentic GTM Pilot the Right Way

Piloting an agentic GTM motion is a governance and measurement challenge before it's a technology challenge. The teams that succeed define their workflow wedge narrowly, instrument their data stack before day one, run a bake-off with real acceptance criteria, and tie every output back to pipeline movement.

Apollo gives GTM teams the unified platform to run this pilot without adding more tools to your stack. From verified contact data powering your agent's inputs to AI-powered sales automation that consolidates prospecting, engagement, and pipeline tracking in one workspace, Apollo is built for exactly this kind of structured, measurable rollout. As Cyera put it: "Having everything in one system was a game changer."

Start Prospecting and build your agentic GTM pilot on a foundation of clean data, unified tooling, and measurable revenue outcomes.

Prove Pipeline ROI With Apollo

ROI pressure killing your next budget approval? Apollo delivers measurable pipeline impact from day one — no guesswork, no slow ramp. Leadium 3x'd annual revenue. Your board wants proof. Apollo gives you numbers.

Schedule a Demo →Don't miss these

Sales

Inbound vs Outbound Marketing: Which Strategy Wins?

Sales

What Is a Sales Funnel? The Non-Linear Revenue Framework for 2026

Sales

What Is a Go-to-Market Strategy? The 2026 GTM Playbook

See Apollo in action

We'd love to show how Apollo can help you sell better.

By submitting this form, you will receive information, tips, and promotions from Apollo. To learn more, see our Privacy Statement.

4.7/5 based on 9,015 reviews