The Lead Scoring Mistake Chief Revenue Officers Make Every Quarter

Your marketing team celebrates MQL volume while your sales team complains about lead quality. Your forecast accuracy drops every quarter because you can't predict which opportunities will actually close. Apollo.io breaks down what chief revenue officers need to fix about their lead scoring systems before they lose another quarter to misaligned priorities.

Key Takeaways

- Lead scoring drift happens when your models use outdated customer profiles. Audit your criteria against closed deals from the last 90 days, not last year's ideal customer profile.

- Single-score systems hide critical buying signals. Track separate scores for fit, engagement, and intent so reps know why a lead matters, not just that it does.

- Real-time scoring changes who gets worked first. Static monthly refreshes mean your team chases cold leads while hot prospects wait in the queue.

- Transparency drives adoption. If reps can't see why a lead scored high, they won't trust the system and will revert to gut instinct.

- Scoring without routing is just reporting. Connect scores directly to assignment rules and service level agreements, or nothing changes operationally.

What revenue problems does broken lead scoring actually create?

Broken lead scoring creates a silent tax on your revenue engine. Your reps waste time on leads that were never going to convert while high-intent prospects sit untouched in the queue.

Here's what happens when scoring fails:

Your marketing and sales teams operate with completely different definitions of "qualified." Marketing celebrates hitting its MQL target. Sales complains that 60% of those MQLs are junk: wrong industry, wrong title, wrong budget. Nobody agrees on what "ready to buy" actually means, so you're measuring activity instead of outcomes. According to VolkartMay research, implementing lead scoring can improve conversion rates by 20%+ for sales teams, but only if both teams agree on the scoring criteria.

Your forecast is built on wishful thinking because you can't distinguish real pipeline from noise. Every opportunity looks the same in your CRM until it suddenly falls apart.

You're flying blind on which deals deserve executive attention and which ones are stalled because the lead was never properly qualified in the first place. Your pipeline review meetings turn into arguments about "why didn't we see this coming?"

Your capacity planning is guesswork. You don't know how many leads each rep can actually handle because you're measuring quantity ("we generated 500 MQLs") instead of quality ("we generated 50 leads that match our ideal customer profile and showed buying intent").

You either overwork your best reps by flooding them with garbage or underutilize them by being too conservative with assignments.

Your speed-to-lead metrics are meaningless. You're tracking time-to-first-touch on all leads equally, which means reps are incentivized to burn through the queue quickly rather than focus on the prospects most likely to close.

The leads that should get worked within minutes sit for hours while reps chase down dead ends.

What does effective lead scoring architecture look like?

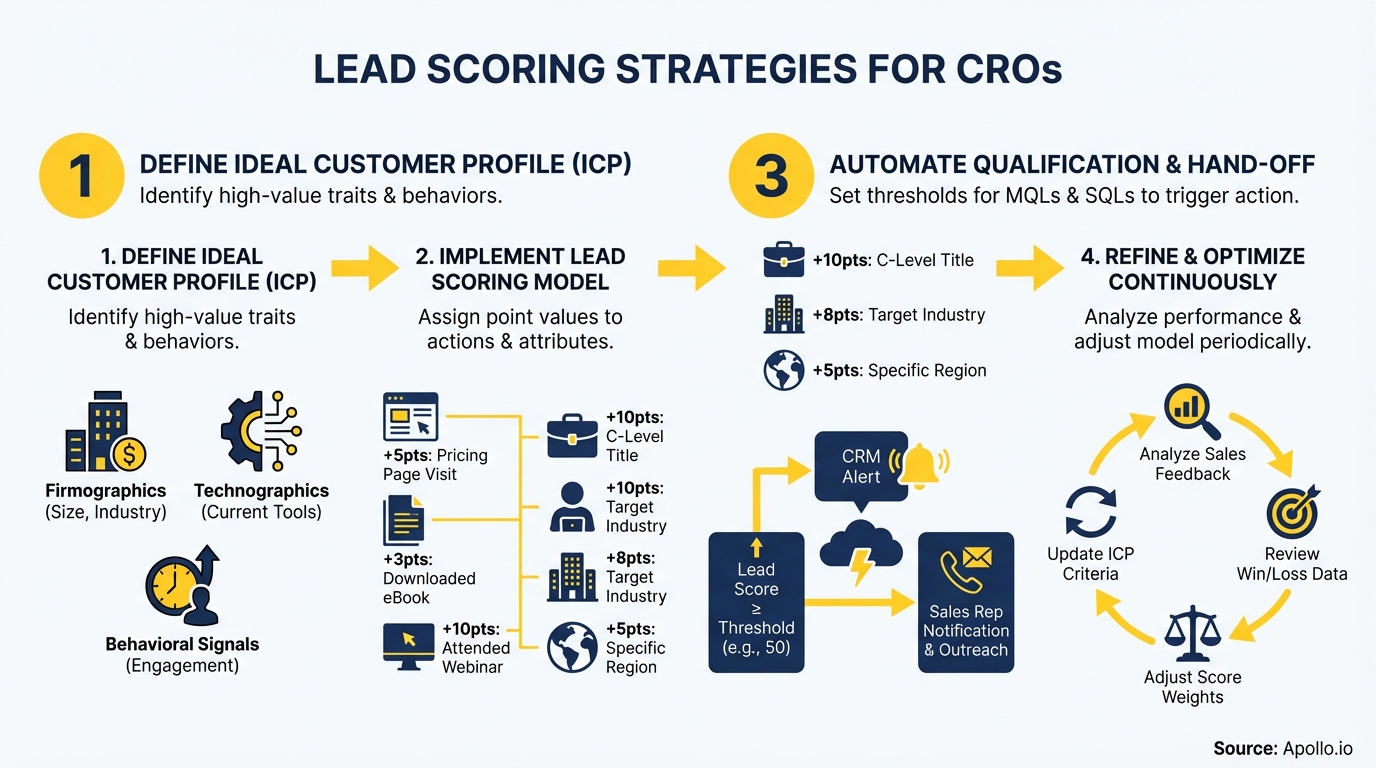

Effective lead scoring isn't a single number; it's an operating system for your revenue engine. You need multiple scores that tell different parts of the story, real-time updates that reflect current behavior, and tight integration with routing so scoring actually changes who gets worked when.

Start with separate scores for fit, engagement, and intent. Fit scoring tells you if this prospect matches your ideal customer profile: industry, company size, revenue, tech stack, whatever correlates with your best customers.

Engagement scoring tracks how this specific contact interacts with you: email opens, website visits, content downloads, demo requests. Intent scoring surfaces buying signals from third-party data: are they researching solutions like yours, comparing vendors, visiting pricing pages?

A single composite score hides critical information. A prospect might have perfect fit but zero engagement (they're a great target but don't know you exist).

Another might have high engagement but terrible fit (they're interested but will never buy). A third might have mediocre fit but extremely high intent (they're actively shopping right now).

Each scenario requires a different sales motion, and your team needs to see all three dimensions.
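
To make the three scenarios concrete, here is a minimal Python sketch. The `LeadScores` class, the `HIGH_BAR` and `LOW_BAR` cutoffs, and the motion names are all hypothetical and chosen for illustration; they are not taken from any particular scoring product. The three sample leads share the same blended score, yet each calls for a different motion.

```python
from dataclasses import dataclass

# Hypothetical cutoffs on a 0-100 scale; tune these against your own closed deals.
HIGH_BAR = 70
LOW_BAR = 30


@dataclass(frozen=True)
class LeadScores:
    fit: int         # match to ideal customer profile
    engagement: int  # interactions with you (emails, visits, demo requests)
    intent: int      # buying signals


def composite(scores: LeadScores) -> float:
    """Single blended number, shown only to illustrate what it hides."""
    return (scores.fit + scores.engagement + scores.intent) / 3


def suggest_motion(scores: LeadScores) -> str:
    """Map the three dimensions to an illustrative sales motion."""
    if scores.fit < LOW_BAR:
        # Interested or not, a poor-fit account is unlikely to buy.
        return "nurture" if scores.engagement >= HIGH_BAR else "deprioritize"
    if scores.intent >= HIGH_BAR:
        # Actively shopping right now, even if fit is only mediocre.
        return "fast_track"
    if scores.fit >= HIGH_BAR and scores.engagement < LOW_BAR:
        # Great target that does not know you exist yet.
        return "outbound_awareness"
    return "standard_queue"


leads = {
    "great_fit_unaware": LeadScores(fit=90, engagement=10, intent=20),
    "engaged_poor_fit": LeadScores(fit=20, engagement=80, intent=20),
    "shopping_now": LeadScores(fit=40, engagement=10, intent=70),
}
for name, scores in leads.items():
    print(name, composite(scores), suggest_motion(scores))
```

All three leads blend to the same composite, which is exactly the information a single score would throw away.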

Build your scoring models using actual closed deals, not theoretical ideal customer profiles. Pull every deal you closed in the last 90 days and identify the common attributes: what industries, what company sizes, what titles, what engagement patterns. Then pull every deal you lost and look for anti-patterns: what characteristics predict failure? Your scoring criteria should reflect reality, not aspiration. Research from SuperAGI shows that 75% of B2B companies had adopted AI for lead scoring by 2025, leading to a 25% improvement in lead quality and a 15% increase in conversion rates.

Make scoring transparent and auditable. Every rep should be able to click into a lead and see exactly why it scored the way it did: which criteria it matched, which ones it missed, how recent the data is.

Opacity kills adoption. If reps don't trust the scores, they'll ignore them and revert to working leads alphabetically or by submission time.

Connect scoring directly to routing and service level agreements. High-score leads should trigger immediate assignment and tighter SLAs, such as a 5-minute response time for leads that hit 80+ on fit, engagement, and intent.

Medium-score leads get assigned but with looser SLAs, such as same-day response. Low-score leads might go into nurture instead of direct sales outreach.

Scoring without operational consequences is just reporting.
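
One way to picture that operational link is a small routing function. This is a sketch under stated assumptions: the queue names, the `MEDIUM_BAR` cutoff of 50, and the choice to model "same-day" as eight working hours are all hypothetical. Only the 80+ bar and the 5-minute SLA come from the example above.

```python
from datetime import timedelta
from typing import NamedTuple, Optional

HIGH_BAR = 80    # from the example: 80+ on fit, engagement, and intent
MEDIUM_BAR = 50  # hypothetical cutoff for the medium tier


class Route(NamedTuple):
    queue: str
    sla: Optional[timedelta]  # None means no direct-sales SLA applies


def route_lead(fit: int, engagement: int, intent: int) -> Route:
    """Pick a queue and SLA from the weakest of the three scores."""
    weakest = min(fit, engagement, intent)
    if weakest >= HIGH_BAR:
        return Route("sales_immediate", timedelta(minutes=5))
    if weakest >= MEDIUM_BAR:
        # "Same-day" is modeled as eight working hours (an assumption).
        return Route("sales_standard", timedelta(hours=8))
    return Route("nurture", None)


print(route_lead(85, 90, 80))
print(route_lead(85, 90, 60))
print(route_lead(85, 20, 90))
```

Gating on the weakest dimension is the simplest possible rule. A real deployment would likely add dimension-specific rules, for example a separate path for high-fit, high-intent accounts that have not engaged yet.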

What metrics tell you if lead scoring is actually working?

Track conversion rates by score tier, not just overall volume. You need to know if high-scoring leads actually convert at higher rates than low-scoring leads.

If your 80+ score leads convert at the same rate as your 40-score leads, your model is broken.

Measure each funnel stage specifically: MQL-to-SQL conversion, SQL-to-opportunity conversion, and opportunity-to-closed-won conversion. Break each one down by score tier.

If high-scoring MQLs convert to SQL at 60% but medium-scoring MQLs convert at 55%, your model isn't differentiated enough. You want to see dramatic separation: high-scoring leads should convert at 2-3x the rate of medium-scoring leads.
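
The separation check is simple arithmetic, sketched below in Python. The tier boundaries (80+ for high, 60-79 for medium) follow the score bands used elsewhere in this article; the function names and the sample data are hypothetical and built to reproduce the 60% versus 55% example.

```python
from collections import defaultdict


def tier_of(score: int) -> str:
    """Bucket a 0-100 score into a tier."""
    if score >= 80:
        return "high"
    if score >= 60:
        return "medium"
    return "low"


def conversion_by_tier(leads):
    """leads: iterable of (score, converted) pairs for one funnel stage."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for score, converted in leads:
        tier = tier_of(score)
        totals[tier] += 1
        wins[tier] += int(converted)
    return {tier: wins[tier] / totals[tier] for tier in totals}


def separation_ratio(rates) -> float:
    """How many times better high-tier leads convert than medium-tier leads."""
    if rates.get("medium", 0) == 0:
        return float("inf")
    return rates.get("high", 0) / rates["medium"]


# Illustrative MQL-to-SQL data: 6 of 10 high-tier and 11 of 20 medium-tier convert.
sample = (
    [(85, True)] * 6 + [(85, False)] * 4
    + [(65, True)] * 11 + [(65, False)] * 9
)
rates = conversion_by_tier(sample)
ratio = separation_ratio(rates)
print(rates, round(ratio, 2), "differentiated" if ratio >= 2 else "not differentiated")
```

With these numbers the ratio lands barely above 1, well short of the 2x floor, which is the signal that the model is not separating good leads from average ones.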

Monitor score drift over time. Pull your scoring model criteria and compare them against your closed deals every quarter. If your model says "director-level and above" but 40% of your recent wins were manager-level, your fit scoring is outdated. If your model prioritizes whitepaper downloads but recent wins all attended webinars, your engagement scoring needs recalibration. According to SaaS & Co benchmarks, predictive lead scoring specifically can deliver a 40%+ improvement in conversion rates when models stay current with actual buying behavior.
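
A quarterly drift check can be as simple as measuring how many recent wins fall outside a criterion your model rewards. The sketch below assumes closed-won deals are available as dictionaries with a `title_level` field; that field name, the `DRIFT_THRESHOLD` value, and the sample data are hypothetical.

```python
# Hypothetical tolerance: flag a criterion once more than a quarter of wins miss it.
DRIFT_THRESHOLD = 0.25


def share_outside_model(recent_wins, field, accepted) -> float:
    """Fraction of recent closed-won deals whose `field` the model would not reward."""
    if not recent_wins:
        return 0.0
    misses = sum(1 for win in recent_wins if win[field] not in accepted)
    return misses / len(recent_wins)


# The model says "director-level and above".
model_titles = {"director", "vp", "c_level"}

# Illustrative quarter: 4 of 10 wins were manager-level.
recent_wins = (
    [{"title_level": "manager"}] * 4
    + [{"title_level": "director"}] * 4
    + [{"title_level": "vp"}] * 2
)

share = share_outside_model(recent_wins, "title_level", model_titles)
needs_recalibration = share > DRIFT_THRESHOLD
print(f"{share:.0%} of wins fall outside the title criterion; recalibrate: {needs_recalibration}")
```

The same function works for engagement criteria, for instance by passing a content-type field and the set of content types your model currently weights.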

Track speed-to-contact by score tier, not just overall averages. Your high-scoring leads should be contacted significantly faster than low-scoring leads; if they're not, your routing isn't working or your reps don't trust the scores.

You want to see something like: 80+ score leads contacted in under 10 minutes, 60-79 score leads contacted same-day, 40-59 score leads contacted within 48 hours.
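
Those targets translate directly into a per-tier report. The following is a rough sketch, not a prescribed method: it uses the median time-to-first-touch per tier, treats "same-day" as eight working hours, and assumes contact data arrives as `(score, minutes_to_first_touch)` pairs. All three choices are assumptions.

```python
from statistics import median

# Targets in minutes. "Same-day" is modeled as 8 working hours (an assumption).
TARGET_MINUTES = {"80+": 10, "60-79": 8 * 60, "40-59": 48 * 60}


def tier_label(score: int):
    """Return the score band, or None for leads below the tracked bands."""
    if score >= 80:
        return "80+"
    if score >= 60:
        return "60-79"
    if score >= 40:
        return "40-59"
    return None


def sla_report(contacts):
    """contacts: iterable of (score, minutes_to_first_touch) pairs.

    Returns {band: (median_minutes, within_target)}.
    """
    by_band = {}
    for score, minutes in contacts:
        band = tier_label(score)
        if band is not None:
            by_band.setdefault(band, []).append(minutes)
    return {
        band: (median(values), median(values) <= TARGET_MINUTES[band])
        for band, values in by_band.items()
    }


contacts = [(85, 4), (90, 30), (82, 8), (65, 200), (45, 4000)]
report = sla_report(contacts)
for band, (mid, ok) in report.items():
    print(band, mid, "within target" if ok else "missed target")
```

A median is used here so that one neglected lead does not mask an otherwise healthy tier; a percentile such as p90 would be a stricter alternative.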

Measure rep adherence to scored priorities. Are reps actually working high-scoring leads first, or are they cherry-picking based on personal preferences?

If your CRM shows high-scoring leads sitting untouched while reps chase low-scoring leads, you have an adoption problem: either the scores aren't trustworthy or the workflow isn't enforced.

Calculate revenue per lead by score tier. The ultimate question: do high-scoring leads generate more revenue?

Track average deal size and win rate for each score bucket. If high-scoring leads close faster and at higher values, your scoring is working.

If there's no revenue difference between score tiers, you're wasting time on a scoring system that doesn't predict outcomes.
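
The revenue comparison can be sketched the same way. This example assumes each lead is recorded as a `(tier, closed_won_amount)` pair, with an amount of 0 for leads that never closed; the function name, the tier names, and the figures are illustrative only.

```python
def revenue_by_tier(leads):
    """leads: iterable of (tier, closed_won_amount); amount is 0 if the lead never closed.

    Returns {tier: {"win_rate", "avg_deal_size", "revenue_per_lead"}}.
    """
    amounts_by_tier = {}
    for tier, amount in leads:
        amounts_by_tier.setdefault(tier, []).append(amount)

    summary = {}
    for tier, amounts in amounts_by_tier.items():
        won = [a for a in amounts if a > 0]
        summary[tier] = {
            "win_rate": len(won) / len(amounts),
            "avg_deal_size": sum(won) / len(won) if won else 0.0,
            "revenue_per_lead": sum(amounts) / len(amounts),
        }
    return summary


sample = [
    ("high", 50_000), ("high", 0), ("high", 30_000), ("high", 0),
    ("low", 10_000), ("low", 0), ("low", 0), ("low", 0), ("low", 0),
]
summary = revenue_by_tier(sample)
for tier, stats in summary.items():
    print(tier, stats)
```

In this made-up data the high tier wins more often and at larger deal sizes, so revenue per lead is ten times higher. If your real numbers show no such gap, the scores are not predicting outcomes.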

What questions should you ask when evaluating lead scoring solutions?

Start with model flexibility and transparency. Ask: Can we build multiple scoring models for different segments or use cases?

Can reps see exactly why a lead received a specific score? Can we adjust scoring criteria without needing engineering support?

Can we test model changes on a subset of leads before rolling them out?

Push on data freshness and enrichment. Ask: How often do scores refresh: real-time, hourly, or daily?

What happens when underlying data changes (job title, company size, etc.)? Do you enrich leads automatically or do we need a separate data provider?

How do you handle data quality issues like duplicates, outdated information, or missing fields?

Dig into integration and automation. Ask: How does scoring connect to our CRM and marketing automation platform?

Can scores trigger routing rules and assignment changes automatically? Can we use scores in email sequences, calling campaigns, and other workflows?

What happens if the integration breaks: do scores stop updating, or do we lose historical data?

Challenge the vendor on model performance and decay. Ask: How do you measure whether a scoring model is working?

What baseline metrics should we expect for MQL-to-SQL conversion by score tier? How do you detect model drift?

What's your recommendation for how often we should recalibrate our criteria? Can you show examples of customers whose models stopped working and how you fixed them?

Get specific about adoption and change management. Ask: What's the typical rep adoption rate in the first 90 days?

What are the most common reasons reps ignore scores? What training and enablement do you provide?

How do you help leadership enforce score-based prioritization? Can you share examples of customers who struggled with adoption and what they did to fix it?

How do you make the final lead scoring decision?

Run a pilot with a subset of your team before committing to a full rollout. Pick 5-10 reps, set up scoring for a single segment, and measure results for 60 days.

Track MQL-to-SQL conversion, time-to-contact, and rep feedback. If scores don't improve conversion rates or if reps actively fight the system, fix the model before expanding.

Define success metrics upfront and hold the vendor accountable. You should see measurable improvement in conversion rates, faster speed-to-contact for high-priority leads, and better forecast accuracy within the first quarter.

If those metrics don't move, either the scoring model needs recalibration or the solution isn't the right fit.

Build a quarterly scoring review into your revenue operations calendar. Scoring models degrade over time as your ideal customer profile evolves, your product changes, and market conditions shift.

Set a recurring meeting with sales leadership, marketing, and revenue operations to review model performance, adjust criteria, and recalibrate weightings. Treat scoring like financial forecasting: it requires ongoing maintenance, not a one-time setup.

Ensure executive alignment on what "qualified" actually means before implementing any scoring system. If your chief marketing officer is incentivized on MQL volume and your sales leaders are incentivized on pipeline value, lead scoring will just formalize the conflict instead of resolving it.

Get agreement on conversion rate targets, acceptable pass-back rates, and what happens when scores and rep judgment disagree.

Tie scoring to compensation and capacity planning so it changes behavior. If reps are still paid based on total activity volume rather than high-quality conversions, they'll ignore scores and work through the queue sequentially.

If you're still assigning leads round-robin without considering rep capacity or specialization, scoring won't improve outcomes. The operating model has to support score-based prioritization, or the technology becomes shelfware.
