How Do I Define Success Metrics for an AI SDR Before Going Live?

Defining success metrics for an AI SDR before going live means setting hard, attributable benchmarks across pipeline, engagement quality, deliverability, and adoption. Without a pre-launch baseline, you cannot prove incrementality, and you risk optimizing for vanity activity instead of revenue. Tools like Apollo's AI Sales Assistant are built to execute end-to-end GTM workflows, but even the best tool fails without a measurement framework to validate its impact. Read our guide on AI writing tools for sales to understand what AI SDRs can and cannot do before you set expectations.

Key Takeaways

- Set a pre-launch baseline and control group so you can prove incremental pipeline impact, not just activity volume.

- Prioritize quality metrics — meeting-to-opportunity rate, pipeline dollars influenced, cost-per-qualified-opportunity — over emails sent or contacts touched.

- Treat deliverability health (bounce rate, spam complaints, opt-outs) as a launch-blocker metric, not an afterthought.

- Include governance KPIs: enablement completion, human-review compliance, and policy adherence are success criteria, not assumptions.

- Define a kill-switch threshold before launch so underperformance triggers action, not debate.

Why Do Most AI SDR Metrics Fail Before Launch?

Most AI SDR rollouts fail at measurement because teams grade AI like a human rep (counting emails sent, calls logged, and meetings booked) without accounting for quality or incrementality. According to TryKondo, 83% of sales teams using AI reported revenue growth in the past year, compared to just 66% of teams without AI. That gap is a correlation, though: it only tells you something about your own rollout if you measure the right things. The 2026 Copilot adoption debate is a useful parallel: tools with millions of reported users showed weak downstream outcomes because "licenses bought" was the primary KPI. For AI SDRs, the same trap applies: activity output is not a success metric.

What Is the Core AI SDR Metric Tree?

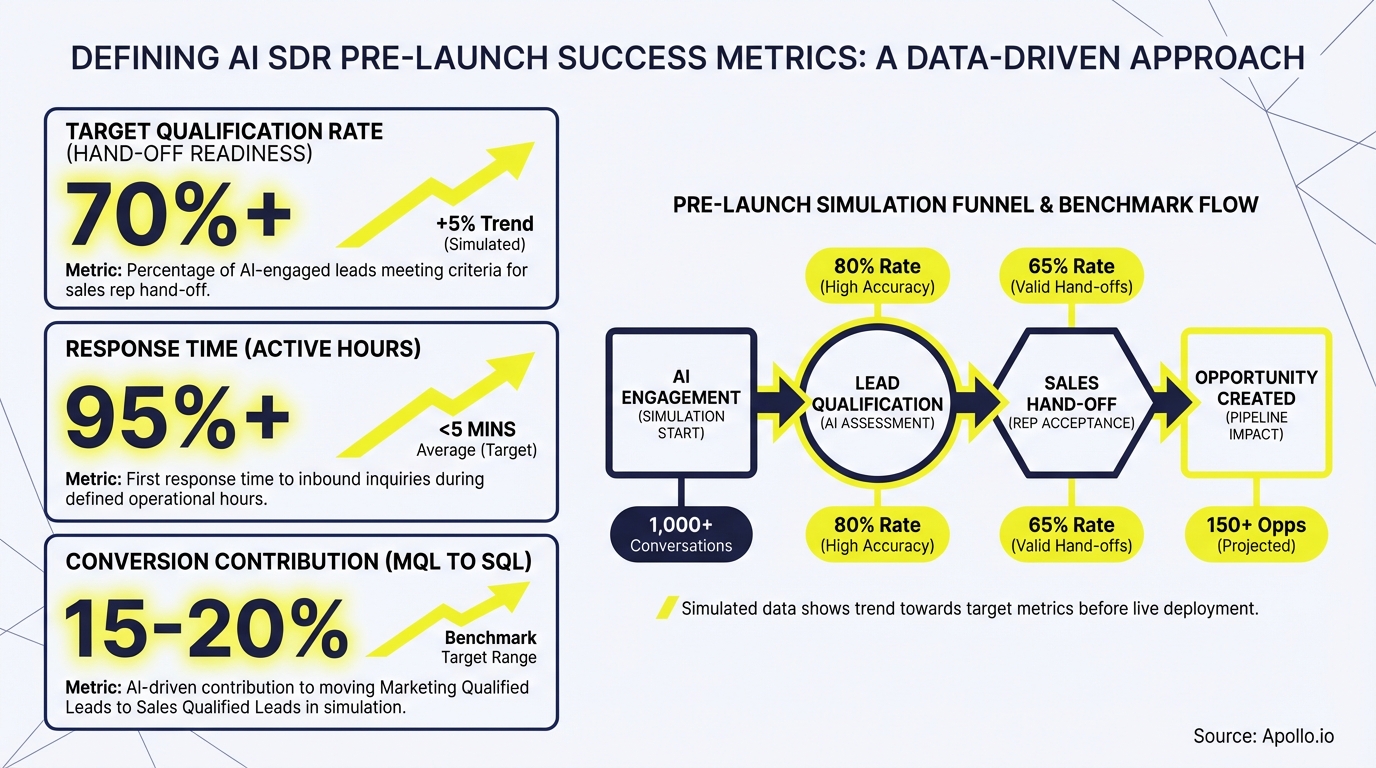

The AI SDR metric tree organizes KPIs into three layers (inputs, leading indicators, and lagging revenue outcomes) plus a deliverability-health gate that sits across all of them. Every metric should trace back to pipeline dollars or cost efficiency.

| Layer | Metric | Why It Matters |
|---|---|---|
| Inputs | Contacts enrolled per week, sequences activated, ICP match score | Validates the AI is working the right list |
| Leading Indicators | Email open rate, reply rate, time-to-first-response, meeting set rate, meeting show rate | Early signals of engagement quality before pipeline forms |
| Lagging Outcomes | Meeting-to-opportunity rate, pipeline dollars influenced, cost-per-qualified-opportunity, incremental SQOs vs. control group | The metrics your CFO and CRO actually care about |
| Deliverability Health | Bounce rate, spam complaint rate, opt-out rate, domain reputation score | A launch-blocker: failing thresholds here destroys all other metrics |
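
The rate metrics in the table can be computed from raw funnel counts. The sketch below is one way to do that, not a prescribed method: the `FunnelCounts` fields and the choice of denominators (for example, meeting set rate measured against replies) are assumptions for illustration, so swap in whatever definitions your team agrees on before launch.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    """Counts for one cohort over one period; field names are illustrative."""
    emails_delivered: int
    replies: int
    meetings_set: int
    meetings_held: int
    opportunities: int


def safe_rate(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def funnel_rates(c: FunnelCounts) -> dict[str, float]:
    """Derive leading and lagging rate metrics from raw counts."""
    return {
        # Leading indicators
        "reply_rate": safe_rate(c.replies, c.emails_delivered),
        "meeting_set_rate": safe_rate(c.meetings_set, c.replies),
        "meeting_show_rate": safe_rate(c.meetings_held, c.meetings_set),
        # Lagging outcome
        "meeting_to_opportunity_rate": safe_rate(c.opportunities, c.meetings_held),
    }


if __name__ == "__main__":
    week = FunnelCounts(emails_delivered=1000, replies=50, meetings_set=20,
                        meetings_held=16, opportunities=4)
    for name, value in funnel_rates(week).items():
        print(f"{name}: {value:.1%}")
```

Running the same function on the human-rep baseline and on the AI SDR cohort keeps the two sets of numbers comparable, because both use identical denominators.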

Research from SalesHQ shows companies adopting AI see an average 10–15% increase in sales productivity immediately after implementation — but only when measurement is tied to business outcomes, not output volume.

Struggling to build targeted prospect lists for your AI SDR pilot? Search Apollo's 230M+ contacts with 65+ filters to ensure your AI starts with the right ICP data.

How Do SDRs and RevOps Leaders Set a Pre-Launch Baseline?

SDRs and RevOps leaders should capture four weeks of human-rep performance data before activating an AI SDR, across every metric in the tree above. This baseline becomes your control benchmark. Pair it with a holdout group — a matched set of accounts or territories that your AI SDR does not touch — so you can calculate true incrementality rather than seasonal or market-driven lift.

- Matched cohort design: Segment accounts by firmographic similarity (industry, headcount, revenue) and randomly assign half to AI SDR outreach, half to the holdout.

- Attribution window: Define upfront how long after first AI touch an opportunity counts as AI-influenced (typically 30–90 days).

- Seasonality adjustment: If launching near a holiday or fiscal quarter-end, note the timing and plan a second measurement period to normalize results.
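
The matched cohort design and attribution window above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the account dictionary keys (`id`, `industry`, `headcount_band`, `revenue_band`), the fixed seed, and the 60-day default window are all hypothetical choices, and when a segment has an odd number of accounts the extra one goes to the holdout.

```python
import random
from collections import defaultdict
from datetime import date, timedelta


def assign_cohorts(accounts, seed=42):
    """Randomly split accounts into 'ai' and 'holdout' within each segment.

    `accounts` is a list of dicts with hypothetical keys:
    'id', 'industry', 'headcount_band', 'revenue_band'.
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    strata = defaultdict(list)
    for acct in accounts:
        key = (acct["industry"], acct["headcount_band"], acct["revenue_band"])
        strata[key].append(acct["id"])

    assignment = {}
    for key in sorted(strata):          # deterministic order across runs
        ids = sorted(strata[key])
        rng.shuffle(ids)
        half = len(ids) // 2            # odd remainder falls into the holdout
        for acct_id in ids[:half]:
            assignment[acct_id] = "ai"
        for acct_id in ids[half:]:
            assignment[acct_id] = "holdout"
    return assignment


def within_attribution_window(first_ai_touch: date, opp_created: date,
                              window_days: int = 60) -> bool:
    """True if the opportunity was created on or after the first AI touch
    and no more than `window_days` later."""
    elapsed = opp_created - first_ai_touch
    return timedelta(0) <= elapsed <= timedelta(days=window_days)


if __name__ == "__main__":
    demo = [
        {"id": f"acct-{i}", "industry": "saas",
         "headcount_band": "51-200", "revenue_band": "10-50M"}
        for i in range(6)
    ]
    print(assign_cohorts(demo))
    print(within_attribution_window(date(2025, 1, 1), date(2025, 2, 1)))
```

Splitting inside each firmographic segment, instead of across the whole list, is what keeps the two cohorts matched on industry, headcount, and revenue.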

For SDR teams managing large account lists, Apollo's Scores Overview shows how AI-generated lead scores can help you segment pilot cohorts by ICP match quality before launch.

What Governance and Deliverability Metrics Are Go-Live Prerequisites?

Governance and deliverability metrics are go-live prerequisites — not nice-to-haves. Your AI SDR can book meetings or burn your sending domain, depending on whether these guardrails are in place before launch. Poor email deliverability will invalidate every engagement metric downstream.

Go-live readiness checklist:

- Bounce rate below 2% on pilot send volume

- Spam complaint rate below 0.1%

- Opt-out/unsubscribe disclosure language reviewed by legal

- Human-review SLA defined: what percentage of AI-generated messages require rep approval before sending

- Enablement completion: all reps managing AI SDR handoffs trained on escalation protocols

- Policy adherence baseline: CRM logging, data retention, and vendor risk documented

- Kill-switch threshold defined: specific metric floors that trigger pause and review
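
The checklist above can be turned into an explicit gate so the launch decision is not left to judgment on the day. The sketch below is illustrative only: the two numeric limits come from the checklist, while the function names and the shape of the `checklist` dictionary are assumptions.

```python
MAX_BOUNCE_RATE = 0.02      # checklist: bounce rate below 2%
MAX_COMPLAINT_RATE = 0.001  # checklist: spam complaint rate below 0.1%


def deliverability_blockers(sent: int, bounced: int, complaints: int) -> list[str]:
    """Return the reasons a pilot send fails the deliverability gate."""
    if sent <= 0:
        return ["no pilot sends recorded"]
    blockers = []
    bounce_rate = bounced / sent
    complaint_rate = complaints / sent
    if bounce_rate >= MAX_BOUNCE_RATE:
        blockers.append(
            f"bounce rate {bounce_rate:.2%} is not below {MAX_BOUNCE_RATE:.2%}")
    if complaint_rate >= MAX_COMPLAINT_RATE:
        blockers.append(
            f"complaint rate {complaint_rate:.2%} is not below {MAX_COMPLAINT_RATE:.2%}")
    return blockers


def ready_for_launch(sent: int, bounced: int, complaints: int,
                     checklist: dict[str, bool]) -> tuple[bool, list[str]]:
    """Combine the numeric gate with the yes/no checklist items.

    `checklist` maps an item name (e.g. 'legal review of opt-out language')
    to whether it has been completed.
    """
    blockers = deliverability_blockers(sent, bounced, complaints)
    blockers += [f"checklist item not done: {name}"
                 for name, done in checklist.items() if not done]
    return (not blockers, blockers)


if __name__ == "__main__":
    ok, reasons = ready_for_launch(
        sent=5000, bounced=150, complaints=2,
        checklist={"legal review of opt-out language": True,
                   "kill-switch threshold defined": False},
    )
    print("ready" if ok else "blocked", reasons)
```

Returning the list of reasons, not just a boolean, gives the team a concrete to-do list when the gate fails.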

See Apollo's guidance on why emails land in spam and how to fix it to harden your deliverability before your AI SDR sends its first sequence.

How Do You Build an ROI Attribution Framework for an AI SDR?

An ROI attribution framework for an AI SDR connects inputs to revenue outcomes through a structured measurement chain. According to Salestools.io, businesses investing in AI sales tools can expect revenue increases of up to 15% and sales ROI improvements of 10–20% — but realizing those gains requires a defined attribution model, not self-reported productivity claims.

Simple ROI formula for AI SDR pilots:

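- Incremental SQOs: SQOs from AI-touched accounts minus SQOs from holdout accounts over the same period (this assumes the two cohorts are the same size; if they are not, compare SQOs per account instead)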

- Pipeline influenced: Sum of opportunity value where AI SDR had first or assisted touch

- Cost-per-qualified-opportunity: Total AI SDR cost (tool + oversight time) divided by incremental SQOs

- CAC payback benchmark: Compare AI SDR cost-per-SQO to your current human SDR cost-per-SQO
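
The formulas above can be worked through in code. The sketch below is one possible reading of them, not a definitive model: scaling holdout SQOs to the AI cohort's size is an added assumption (it reduces to a plain subtraction when the cohorts are equal), and every parameter name and the `ai_touch` / `amount` keys are hypothetical.

```python
def pipeline_influenced(opportunities) -> float:
    """Sum opportunity value where the AI SDR had a first or assisted touch.

    `opportunities` is a list of dicts with hypothetical keys
    'amount' (float) and 'ai_touch' (bool).
    """
    return sum(o["amount"] for o in opportunities if o["ai_touch"])


def pilot_roi(ai_sqos, holdout_sqos, ai_accounts, holdout_accounts,
              tool_cost, oversight_hours, hourly_rate, human_cost_per_sqo):
    """Compute incremental SQOs and cost-per-qualified-opportunity for a pilot."""
    # Scale the holdout result to the AI cohort's size so unequal cohorts
    # are still comparable (assumption; equals holdout_sqos when sizes match).
    expected_without_ai = holdout_sqos * ai_accounts / holdout_accounts
    incremental_sqos = ai_sqos - expected_without_ai

    # Total AI SDR cost = tool spend + human oversight time.
    total_cost = tool_cost + oversight_hours * hourly_rate

    # Cost per SQO is undefined when there is no positive lift.
    cost_per_sqo = total_cost / incremental_sqos if incremental_sqos > 0 else None
    vs_human = (cost_per_sqo / human_cost_per_sqo
                if cost_per_sqo is not None else None)
    return {
        "incremental_sqos": incremental_sqos,
        "total_cost": total_cost,
        "cost_per_sqo": cost_per_sqo,
        "cost_ratio_vs_human": vs_human,  # below 1.0 means cheaper than human SDRs
    }


if __name__ == "__main__":
    result = pilot_roi(ai_sqos=30, holdout_sqos=18,
                       ai_accounts=200, holdout_accounts=200,
                       tool_cost=6000, oversight_hours=40, hourly_rate=75,
                       human_cost_per_sqo=1000)
    print(result)
```

With these illustrative inputs the pilot yields 12 incremental SQOs at a total cost of 9,000, or 750 per SQO, which is 0.75 of the assumed human SDR cost.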

For RevOps leaders managing sales transformation initiatives, Apollo's Outbound Copilot provides credit-cost transparency before each run, making it straightforward to track AI SDR spend against pipeline outcomes in real time.

Need cleaner pipeline data to validate your AI SDR ROI? Build and track your pipeline with Apollo's unified GTM platform so attribution is accurate from day one.

What Early-Warning Signals Should Trigger a Pause or Kill-Switch?

Early-warning signals that should trigger a pause include: reply rates dropping below your pre-launch baseline, spam complaints rising above threshold, meeting show rate declining week-over-week, or AEs flagging low meeting quality. Define these thresholds before launch so underperformance triggers a structured review, not a reactive debate.

- Week 1–2: Monitor deliverability daily. Pause if bounce or complaint rates breach thresholds.

- Week 3–4: Evaluate leading indicators (reply rate, meeting set rate). Adjust ICP filters or messaging if below baseline.

- Week 5–8: Assess lagging outcomes against holdout. If incremental SQOs are flat or negative, escalate to RevOps for root-cause analysis before scaling.
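
The week-by-week schedule above maps naturally onto a small rule check that runs against each weekly snapshot. This is a hedged sketch: the `WeeklySnapshot` fields, the action labels, and the default thresholds (borrowed from the go-live checklist) are assumptions, and your own kill-switch floors should replace them.

```python
from dataclasses import dataclass


@dataclass
class WeeklySnapshot:
    """One week of pilot metrics; field names are illustrative."""
    week: int
    bounce_rate: float
    complaint_rate: float
    reply_rate: float
    meeting_show_rate: float
    incremental_sqos: float  # AI cohort vs. holdout, cumulative to date


def review_actions(history: list[WeeklySnapshot], baseline_reply_rate: float,
                   max_bounce: float = 0.02,
                   max_complaint: float = 0.001) -> list[str]:
    """Return the actions triggered by the most recent snapshot."""
    current = history[-1]
    actions = []

    # Deliverability is checked every week and always pauses sending.
    if current.bounce_rate >= max_bounce or current.complaint_rate >= max_complaint:
        actions.append("pause: deliverability threshold breached")

    # From week 3, leading indicators are compared with the baseline.
    if current.week >= 3 and current.reply_rate < baseline_reply_rate:
        actions.append("adjust: reply rate below pre-launch baseline")

    # Week-over-week decline in meeting show rate.
    if len(history) >= 2 and current.meeting_show_rate < history[-2].meeting_show_rate:
        actions.append("review: meeting show rate declined week-over-week")

    # From week 5, lagging outcomes are judged against the holdout.
    if current.week >= 5 and current.incremental_sqos <= 0:
        actions.append("escalate: incremental SQOs flat or negative vs. holdout")

    return actions


if __name__ == "__main__":
    history = [
        WeeklySnapshot(4, 0.010, 0.0005, 0.05, 0.80, 0.0),
        WeeklySnapshot(5, 0.010, 0.0005, 0.03, 0.70, -1.0),
    ]
    for action in review_actions(history, baseline_reply_rate=0.04):
        print(action)
```

Encoding the thresholds before launch means a breach produces a named action automatically, which is the point of agreeing on a kill-switch in advance.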

The AI Assistant to Sell Smarter guide covers how Apollo's AI surfaces performance analytics so managers can spot these signals without building custom dashboards. As Harry Gable-Newkirk, Enterprise Sales Development Manager at YipitData, put it: "Apollo's AI Assistant helped me instantly qualify or disqualify accounts using the right signals — saving me at least a full day's work."

How to Define AI SDR Success Metrics Before Going Live: Summary and Next Steps

Defining success metrics for an AI SDR before going live comes down to four commitments: establish a baseline, design a control group, set governance guardrails, and tie every metric to pipeline dollars. Avoid the activity-volume trap. According to Sopro, 63% of organizations using generative AI report productivity and efficiency gains — but those gains compound only when teams measure outcomes, not outputs.

Apollo's AI Sales Assistant gives GTM teams a workflow-native platform to research accounts, build prospect lists, generate personalized sequences, and track performance — all in one place. Consolidating your AI SDR stack into a unified platform (instead of stitching together point tools) makes the attribution work far easier, and the results far more defensible to your CFO.

Ready to launch your AI SDR with a metrics framework your whole revenue team trusts? Get Leads Now and see how Apollo's end-to-end GTM platform makes measurement built-in, not bolted on.
